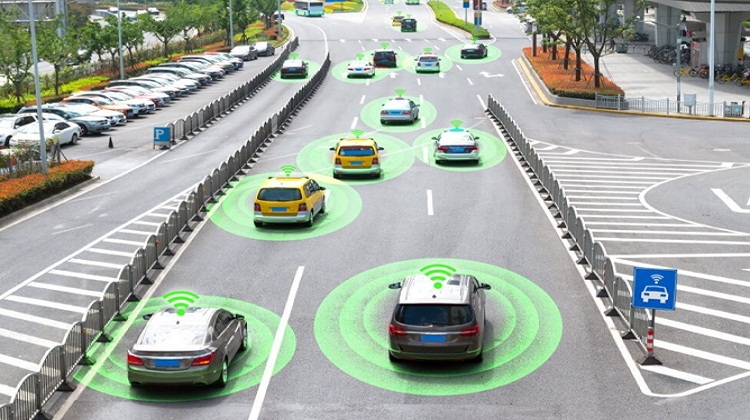

With the development of autonomous technology such as robotics and self-driving vehicles, sensor data fusion has become an important topic in AI. Sensors have been around for a long time, but it is now much simpler to integrate them into autonomous vehicles or, for that matter, into the portable digital assistant you carry everywhere: your mobile phone. The Sensor Fusion Training Mumbai is worth your time and resources.

Machines use a variety of sensors for different purposes, just as people rely on a variety of senses. Some sensors, like cameras, mimic the sense of sight. Others can perceive what the human eye cannot. Bats, marine mammals, and even some people can locate objects and one another through echolocation, and this ability inspired the development of sonar and ultrasonic sensors.

Sensor fusion approaches can be classified in three ways:

- The level of abstraction at which sensor data is fused determines how much storage, bandwidth, and processing power the fusion requires, as well as how clear and precise the final model is. In other words, how far the sensory input has been processed before we integrate it has a major effect on the outcome.

- When we merge data from two different sensors that observe the same object, we are fusing competitive, or redundant, data. Complementary fusion, on the other hand, combines two sensors to produce a picture that could not be measured with a single sensor. Finally, scanning an object with several sensors at the same time makes cooperative fusion possible: combining their outputs gives us a new perspective.

- In a centralized architecture, all data travels to a central processing unit. In a decentralized architecture, each sensor node fuses its raw data locally before sending it on. The locally processed results are then passed to a central point for the final sensor fusion.
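The difference between the centralized and decentralized architectures can be sketched in a few lines of Python. This is only an illustration under simple assumptions: the sensor names (`lidar`, `radar`), the readings, and the choice of a plain average as the fusion rule are all hypothetical, and real systems typically use more sophisticated estimators.

```python
from statistics import fmean


def fuse_centralized(raw_by_sensor):
    """Centralized: every raw sample is sent to one place and fused there."""
    all_samples = [x for samples in raw_by_sensor.values() for x in samples]
    return fmean(all_samples)


def local_summary(samples):
    """Decentralized step: a node reduces its raw samples to (mean, count)."""
    return fmean(samples), len(samples)


def fuse_decentralized(raw_by_sensor):
    """Each node pre-fuses locally; the central point combines the summaries."""
    summaries = [local_summary(s) for s in raw_by_sensor.values()]
    total = sum(count for _, count in summaries)
    # Weight each local mean by how many samples it represents.
    return sum(mean * count for mean, count in summaries) / total


# Hypothetical distance readings (in meters) from two sensors.
readings = {
    "lidar": [10.0, 10.5, 9.5],
    "radar": [11.0, 9.0],
}

centralized = fuse_centralized(readings)
decentralized = fuse_decentralized(readings)
print(centralized, decentralized)
```

With a simple average as the fusion rule, both routes arrive at the same estimate. The decentralized route only ships one small summary per sensor instead of every raw sample, which is where the bandwidth savings come from.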

All sensors share one weakness: they are sensitive to disturbances. A camera might be blinded by sunlight, and an antenna's signal could suffer from interference. Under such conditions, the sensory information can be skewed, inconsistent, or just plain wrong. As a result, sensor data gathered in the real world is made up of a signal (the part that sensor fusion is interested in) and noise. Sensor fusion techniques analyze many data samples together to filter out that noise. The Sensor Fusion Training Hyderabad puts a great emphasis on the nitty-gritty of it all.
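To see why analyzing many samples helps, here is a minimal simulation, assuming the noise is zero-mean Gaussian and independent from sample to sample. The true distance, noise level, and sample counts are made-up values chosen only for illustration.

```python
import random
from statistics import fmean


def noisy_reading(true_value, noise_std, rng):
    """One simulated measurement: the signal plus Gaussian noise."""
    return true_value + rng.gauss(0.0, noise_std)


def averaged_reading(true_value, noise_std, n_samples, rng):
    """Average several noisy measurements of the same quantity."""
    return fmean(noisy_reading(true_value, noise_std, rng) for _ in range(n_samples))


def rms_error(estimates, true_value):
    """Root-mean-square error of a list of estimates."""
    return fmean((e - true_value) ** 2 for e in estimates) ** 0.5


rng = random.Random(0)   # fixed seed so the run is repeatable
TRUE_DISTANCE = 5.0      # hypothetical signal, in meters
NOISE_STD = 0.5          # hypothetical noise level, in meters

single = [noisy_reading(TRUE_DISTANCE, NOISE_STD, rng) for _ in range(2000)]
averaged = [averaged_reading(TRUE_DISTANCE, NOISE_STD, 25, rng) for _ in range(2000)]

single_err = rms_error(single, TRUE_DISTANCE)
averaged_err = rms_error(averaged, TRUE_DISTANCE)
print(f"single sample error:      {single_err:.3f}")
print(f"25-sample average error:  {averaged_err:.3f}")
```

Under these assumptions, the error of the averaged estimate shrinks roughly with the square root of the number of samples, so averaging 25 readings should cut the error to about a fifth of a single reading's error.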

Much like humans, systems benefit from absorbing knowledge from many sources. That is the fundamental idea of sensor fusion. Sensor fusion is a form of data fusion that combines sensory data to reduce uncertainty and improve the available information, so that better-informed decisions can be made.
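One common way to make "reducing uncertainty" concrete is inverse-variance weighting, sketched below. This is one illustrative technique rather than the only way to fuse data, and it assumes each sensor's error is unbiased and independent of the others. The function name `fuse_estimates` and the example numbers are hypothetical.

```python
def fuse_estimates(estimates):
    """Fuse (value, variance) pairs using inverse-variance weighting.

    Each estimate is weighted by 1 / variance, so more certain sensors
    count for more. Returns the fused (value, variance).
    """
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    return value, 1.0 / total


# Two hypothetical sensors measuring the same distance (meters, meters^2).
precise_sensor = (10.2, 0.04)
coarse_sensor = (9.8, 0.16)

fused_value, fused_var = fuse_estimates([precise_sensor, coarse_sensor])
print(f"fused value: {fused_value:.2f}, fused variance: {fused_var:.3f}")
```

The fused value lands closer to the more precise sensor, and the fused variance is smaller than either input variance, which is the sense in which combining sources reduces uncertainty.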

Sensor fusion is a distinctive application of data fusion that has grown greatly in recent years. Almost any technology that operates in the real world can benefit from it. This is true even for robots that have to explore unfamiliar terrain, like your robot vacuum cleaner.